本文所整理的技巧与以前整理过10个Pandas的常用技巧不同,你可能并不会经常的使用它,但是有时候当你遇到一些非常棘手的问题时,这些技巧可以帮你快速解决一些不常见的问题。

1、Categorical类型

# raw data +----------+------------------------+ | class | filename | +----------+------------------------+ | Bathroom | Bathroom\bath_1.jpg | | Bathroom | Bathroom\bath_10.jpg | | Bathroom | Bathroom\bath_1003.jpg | | Bathroom | Bathroom\bath_1004.jpg | | Bathroom | Bathroom\bath_1005.jpg | +----------+------------------------+

# target +------------------------+------------------------+----------------------------+ | anchor | positive | negative | +------------------------+------------------------+----------------------------+ | Bathroom\bath_1.jpg | Bathroom\bath_10.jpg | Dinning\din_540.jpg | | Bathroom\bath_100.jpg | Bathroom\bath_1003.jpg | Dinning\din_1593.jpg | | Bathroom\bath_1003.jpg | Bathroom\bath_1004.jpg | Bedroom\bed_329.jpg | | Bathroom\bath_1004.jpg | Bathroom\bath_1005.jpg | Livingroom\living_1030.jpg | | Bathroom\bath_1005.jpg | Bathroom\bath_1007.jpg | Bedroom\bed_1240.jpg | +------------------------+------------------------+----------------------------+

triplets.info(memory_usage="deep")

# Column Non-Null Count Dtype # --- ------ -------------- ----- # 0 anchor 525000 non-null category # 1 positive 525000 non-null category # 2 negative 525000 non-null category # d*: category(3) # memory usage: 4.6 MB

# without categories triplets_raw.info(memory_usage="deep")

# Column Non-Null Count Dtype # --- ------ -------------- ----- # 0 anchor 525000 non-null object # 1 positive 525000 non-null object # 2 negative 525000 non-null object # d*: object(3) # memory usage: 118.1 MB2、行列转换

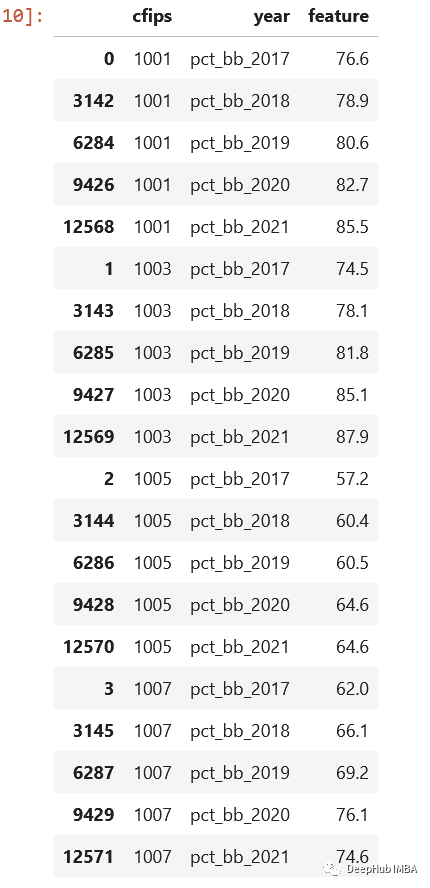

cols = sorted([col for col in original_df.columns \ if col.startswith("pct_bb")]) df = original_df[(["cfips"] + cols)] df = df.melt(id_vars="cfips", value_vars=cols, var_name="year", value_name="feature").sort_values(by=["cfips", "year"])

3、apply()很慢

import pandas as pd import swifter

def target_function(row): return row * 10

def traditional_way(data): data['out'] = data['in'].apply(target_function)

def swifter_way(data): data['out'] = data['in'].swifter.apply(target_function)

import pandas as pd from pandarallel import pandarallel

def target_function(row): return row * 10

def traditional_way(data): data['out'] = data['in'].apply(target_function)

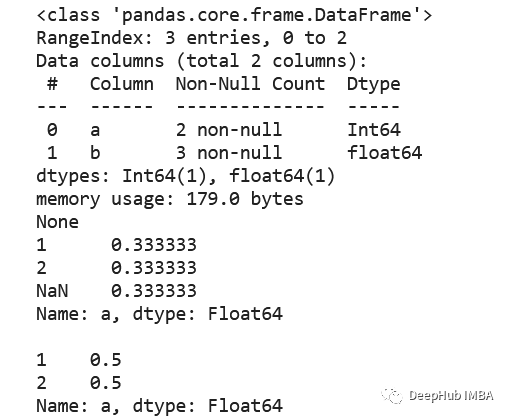

def pandarallel_way(data): pandarallel.initialize() data['out'] = data['in'].parallel_apply(target_function)4、空值,int, Int64

5、Csv, 压缩还是parquet?

| file | size | +------------------------+---------+ | triplets_525k.csv | 38.4 MB | | triplets_525k.csv.gzip | 4.3 MB | | triplets_525k.csv.zip | 4.5 MB | | triplets_525k.parquet | 1.9 MB | +------------------------+---------+

6、value_counts ()

df = pd.DataFrame({"a": [1, 2, None], "b": [4., 5.1, 14.02]}) df["a"] = df["a"].astype("Int64") print(df.info()) print(df["a"].value_counts(normalize=True, dropna=False), df["a"].value_counts(normalize=True, dropna=True), sep="\n\n")

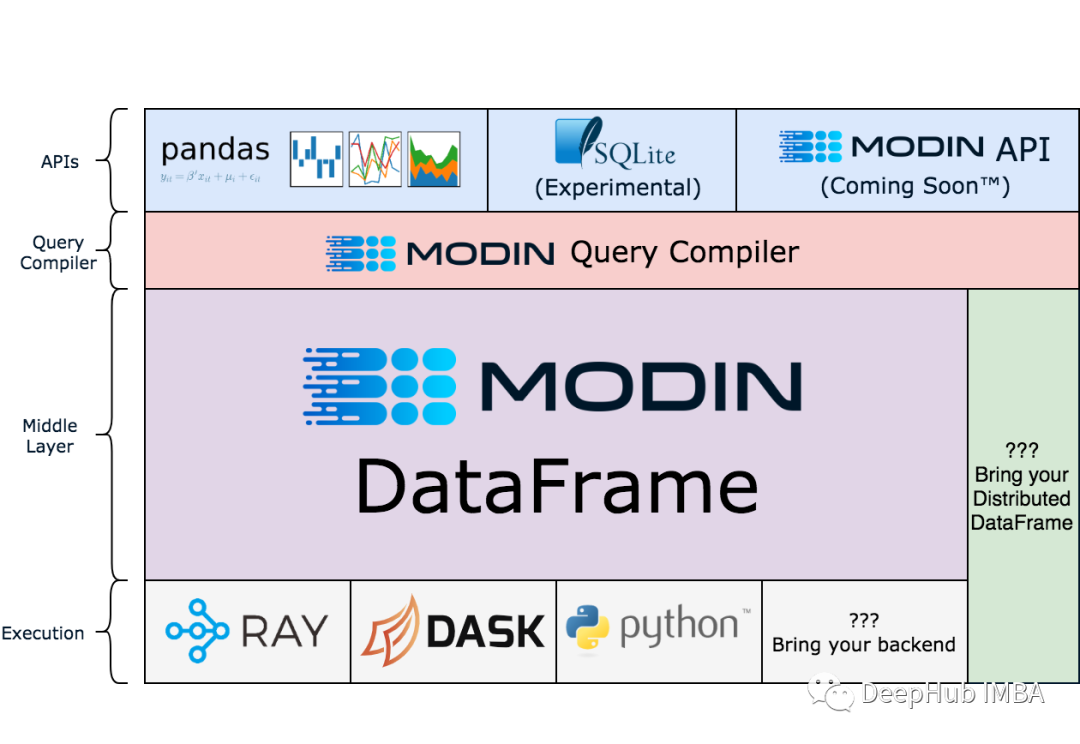

7、Modin

!pip install modin[all]

import modin.pandas as pd df = pd.read_csv("my_dataset.csv")

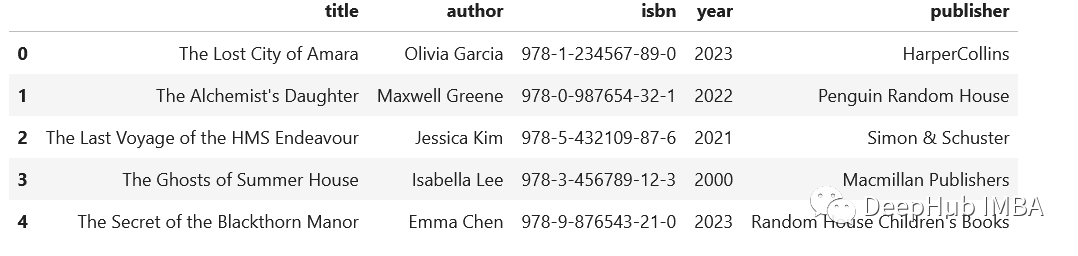

8、extract()

import pandas as pd

regex = (r'(?P<title>[A-Za-z\'\s]+),' r'(?P<author>[A-Za-z\s\']+),' r'(?P<isbn>[\d-]+),' r'(?P<year>\d{4}),' r'(?P<publisher>.+)') addr = pd.Series([ "The Lost City of Amara,Olivia Garcia,978-1-234567-89-0,2023,HarperCollins", "The Alchemist's Daughter,Maxwell Greene,978-0-987654-32-1,2022,Penguin Random House", "The Last Voyage of the HMS Endeavour,Jessica Kim,978-5-432109-87-6,2021,Simon & Schuster", "The Ghosts of Summer House,Isabella Lee,978-3-456789-12-3,2000,Macmillan Publishers", "The Secret of the Blackthorn Manor,Emma Chen,978-9-876543-21-0,2023,Random House Children's Books" ]) addr.str.extract(regex)

9、读写剪贴板

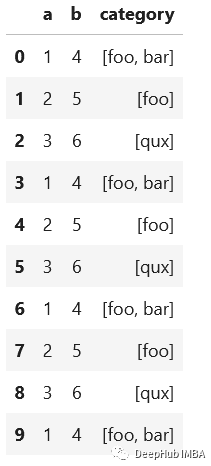

10、数组列分成多列

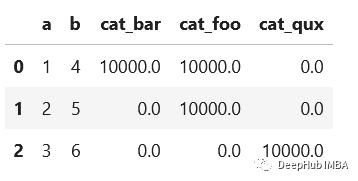

import pandas as pd df = pd.DataFrame({"a": [1, 2, 3], "b": [4, 5, 6], "category": [["foo", "bar"], ["foo"], ["qux"]]})

# let's increase the number of rows in a dataframe df = pd.concat([df]*10000, ignore_index=True)

def dummies_series_apply(df): return df.join(df['category'].apply(pd.Series) \ .stack() \ .str.get_dummies() \ .groupby(level=) \ .sum()) \ .drop("category", axis=1) %timeit dummies_series_apply(df.copy()) #5.96 s ± 66.6 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)

from sklearn.preprocessing import MultiLabelBinarizer def sklearn_mlb(df): mlb = MultiLabelBinarizer() return df.join(pd.DataFrame(mlb.fit_transform(df['category']), columns=mlb.classes_)) \ .drop("category", axis=1) %timeit sklearn_mlb(df.copy()) #35.1 ms ± 1.31 ms per loop (mean ± std. dev. of 7 runs, 10 loops each)

def dummies_vectorized(df): return pd.get_dummies(df.explode("category"), prefix="cat") \ .groupby(["a", "b"]) \ .sum() \ .reset_index() %timeit dummies_vectorized(df.copy()) #29.3 ms ± 1.22 ms per loop (mean ± std. dev. of 7 runs, 10 loops each)